MFormats SDK: a new audio/video API for Windows developers

MFormats SDK is our low-level API for multimedia developers. We believe it suits all kinds of generic and specialised audio and video tasks in areas such as broadcasting, security, medicine, sports, education and design.

About MFormats SDK

MFormats is a full-featured replacement for GStreamer, DirectShow and Media Foundation. In our opinion, all three are overcomplicated, yet still insufficient for the video developer’s needs: you are forced to initialise, configure and operate a number of “helper” structures instead of focusing on the app itself.

Coding with MFormats takes only a fraction of that effort: we went through several design iterations to make it as simple and straightforward as possible. With MFormats we are changing the paradigm of data access, from the traditional play/pause/rewind/fast-forward model to random access, the same principle behind the random-access memory that is standard in computing today. The evolution of computer hardware is what has made this shift possible.

It is worth mentioning that we developed MFormats with all of the requirements of our own MPlatform SDK in mind, so that it could eventually become MPlatform’s underlying framework. MPlatform has proved to be the best high-level SDK for broadcast applications; its only problem today is that it is too complex for simple tasks such as synchronised video transitions across three streams.

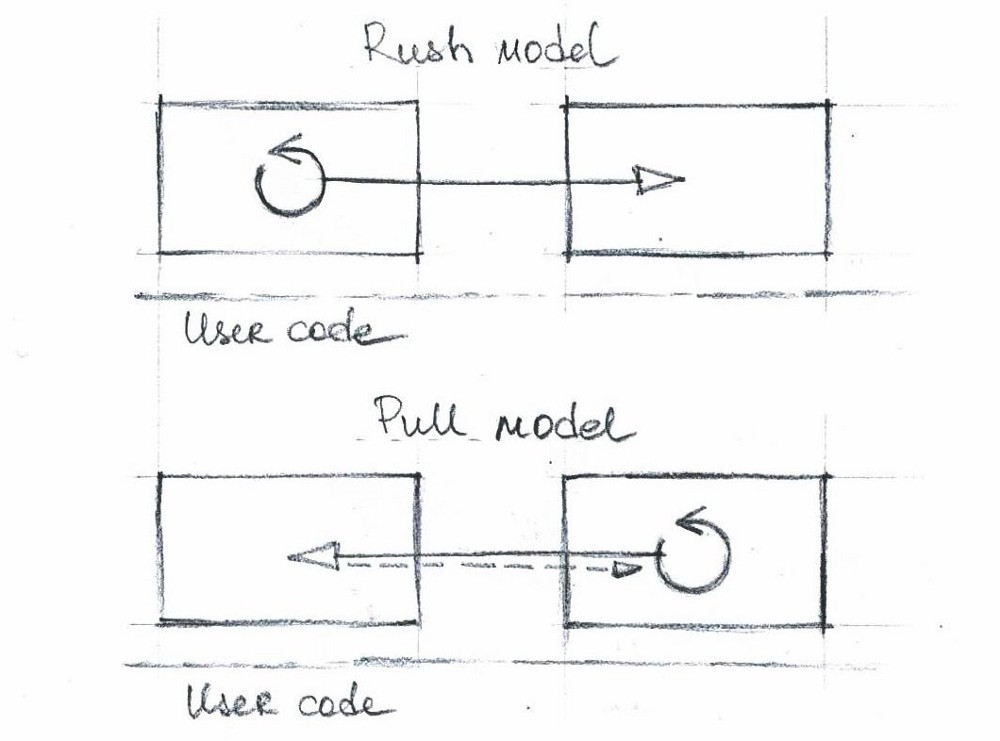

The push and pull models

The big idea behind DirectShow (released in 1996) and GStreamer (released in 2001) is the pipeline, where a set of elements (filters) are connected together into graphs. The data in this model simply flows from the upstream element to the downstream element.

In DirectShow, there are two approaches to controlling this process:

- In the push model (the default), a worker thread in the upstream filter initiates the data flow, determines what data to send, and pushes it to the downstream filter.

- In the pull model (for example, when a parser pulls data from a source filter through the IAsyncReader interface), the downstream filter requests data from the upstream filter. Samples still travel downstream, but a thread in the downstream filter controls the data flow.
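To make the difference concrete, here is a toy C++17 sketch of the two models. It is not DirectShow code: `Sample`, `run_push`, `PullSource` and `run_pull` are hypothetical names invented for illustration, and real filters exchange media samples through COM interfaces rather than plain function calls. The only point it makes is where the loop lives.

```cpp
#include <cassert>
#include <functional>
#include <optional>
#include <vector>

// A toy "sample": just a sequence number. Hypothetical, not a DirectShow type.
struct Sample { int index; };

// Push model: the upstream side owns the loop and hands each sample downstream.
// The downstream side is only a callback; it does not decide when data arrives.
void run_push(int count, const std::function<void(const Sample&)>& deliver) {
    assert(count >= 0);
    for (int i = 0; i < count; ++i) {
        deliver(Sample{i});  // upstream decides what to send and when
    }
}

// Pull model: the upstream side only answers requests.
class PullSource {
public:
    explicit PullSource(int count) : count_(count) {}

    // Returns the next sample, or std::nullopt at end of stream.
    std::optional<Sample> request() {
        if (next_ >= count_) return std::nullopt;
        return Sample{next_++};
    }

private:
    int count_;
    int next_ = 0;
};

// The downstream side owns the loop; samples still travel from source to sink.
std::vector<int> run_pull(PullSource& source) {
    std::vector<int> received;
    while (std::optional<Sample> s = source.request()) {
        received.push_back(s->index);
    }
    return received;
}
```

In the push version the receiving code is a passive callback; in the pull version the receiving code drives the loop, even though the data moves in the same direction.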

In both approaches, the data flows outside the user code, through a set of interconnected black boxes. In a sense, it is not you who controls the data flow; rather, the data flow dictates how you have to write your code.

For instance, if you were to develop a filter, you’d have to take into account that every filter receives instructions from two sides: the Filter Graph Manager and the developer of the user code.

If you were to develop an end-user app in DirectShow, your control over the data flow would be limited. Because the architecture is complex and dynamic, you never have access to the true state of things, nor direct access to the data (for example, to pull out an exact frame). DirectShow lacks a certain precision and lags in certain ways, and this is exactly what we’ve addressed with MFormats SDK.

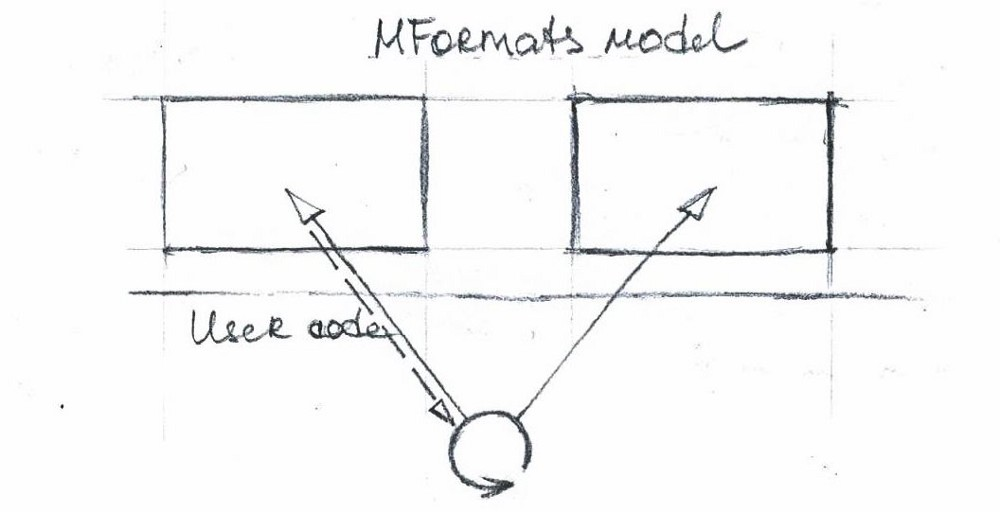

The MFormats model

The limitations and programming requirements of DirectShow have always been a pain for us: we needed more control. So we created an approach in which developers design their own pipelines when they need to, and came up with a set of simple tools (objects with clean APIs) that make this possible.

In MFormats, nothing happens unless you explicitly instruct it to: the process that controls the data flow lives in the user’s code. Using the standard loops and expressions of their preferred programming language (C#, Delphi, VB.NET, C++), developers build their own data flow and keep full control over it.

The data itself (that is, the video frames) also flows through the user’s code, so developers know exactly how many frames their code has processed and have direct access to the frames themselves.
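As a rough illustration of this model, the C++17 sketch below shows a pipeline that is nothing more than a user-owned loop. It does not use the real MFormats API: `Frame`, `FakeReader`, `frame_at` and `run_loop` are hypothetical stand-ins, with an integer in place of real picture data. What it shows is the structure described above: frames are fetched only when the code asks for them (here by index, in the random-access spirit mentioned earlier), processed in ordinary code, and counted exactly.

```cpp
#include <cassert>
#include <cstddef>
#include <optional>
#include <vector>

// Hypothetical stand-ins, NOT the MFormats API.
struct Frame {
    std::size_t index;
    int brightness;  // placeholder for real picture data
};

class FakeReader {
public:
    explicit FakeReader(std::size_t total) : total_(total) {}

    // Random access: request any frame by its index.
    std::optional<Frame> frame_at(std::size_t i) const {
        if (i >= total_) return std::nullopt;
        return Frame{i, static_cast<int>(i % 256)};
    }

    std::size_t size() const { return total_; }

private:
    std::size_t total_;
};

// The whole "pipeline" is an ordinary loop in user code.
std::size_t run_loop(const FakeReader& reader, std::vector<Frame>& output) {
    std::size_t processed = 0;
    for (std::size_t i = 0; i < reader.size(); ++i) {
        std::optional<Frame> f = reader.frame_at(i);  // nothing happens until we ask
        assert(f.has_value());
        f->brightness = f->brightness / 2;            // any per-frame processing
        output.push_back(*f);                         // hand the frame to a "renderer"
        ++processed;                                  // the exact count is always known
    }
    return processed;
}
```

Because the loop is plain code, stopping, skipping, reordering or mixing frames from several readers is just a matter of changing the loop, with no graph state to negotiate.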

The structure of MFormats SDK

MFormats SDK consists of several objects with clean, simple interfaces:

- MFReader — to read files and network streams;

- MFLive — to read from devices;

- MFRenderer — to render video to external devices and to the screen;

- MFWriter — to write files;

- MFFrame — to work with video frames.

So, files, network streams, live sources, frames — that’s it!

The almighty MFFrame

MFFrame, our container object for audio/video data, is designed to perform various operations on the video frame itself. It has the following features:

- MFFrame always stores the video sample together with the audio samples that belong to it, so audio and video always stay in sync. This makes most concerns about audio/video synchronisation irrelevant.

- MFFrame knows the frame’s format info (video size, frame rate, etc.).

- MFFrame always carries the original encoded frame, which is very handy for transcoding and transmuxing use cases.

- MFFrame objects can be shared between processes. This improves stability: for example, when you work with network streams, it makes sense to decode each stream in a separate process so that an unstable stream cannot affect the rest of your application. The other benefit is flexibility in structuring your application; for instance, you will probably want playout and video logging to run in separate processes.

- Sharing MFFrame objects between processes involves no memory copy: the data is stored in shared memory. Because of this, killing one process does not destroy an MFFrame; the frame is released only when all the processes that reference it are gone.
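The first point in the list above (each video sample travels with its matching audio samples) comes down to simple arithmetic: how many audio samples belong to each video frame. The C++17 sketch below computes this for a rational frame rate. It is a generic illustration under our own assumption that frame boundaries are placed by floor rounding; it is not MFormats’ internal algorithm, and the function names are hypothetical.

```cpp
#include <cassert>
#include <cstdint>
#include <vector>

// Number of audio samples belonging to video frame k, for a frame rate of
// fps_num/fps_den and audio at sample_rate Hz. Frame boundaries sit at
// floor(n * sample_rate * fps_den / fps_num), so rounding never accumulates.
std::int64_t samples_for_frame(std::int64_t k, std::int64_t sample_rate,
                               std::int64_t fps_num, std::int64_t fps_den) {
    assert(k >= 0 && sample_rate > 0 && fps_num > 0 && fps_den > 0);
    auto boundary = [&](std::int64_t n) { return n * sample_rate * fps_den / fps_num; };
    return boundary(k + 1) - boundary(k);
}

// Per-frame sample counts for the first `frames` frames.
std::vector<std::int64_t> cadence(int frames, std::int64_t sample_rate,
                                  std::int64_t fps_num, std::int64_t fps_den) {
    std::vector<std::int64_t> out;
    for (int k = 0; k < frames; ++k) {
        out.push_back(samples_for_frame(k, sample_rate, fps_num, fps_den));
    }
    return out;
}
```

At 48 kHz and 25 fps every frame gets exactly 1920 samples. At 29.97 fps (30000/1001) the ideal count is fractional (1601.6), so `cadence(5, 48000, 30000, 1001)` returns 1601, 1602, 1601, 1602, 1602: 8008 samples per five frames, with no drift. Other rounding conventions order the cycle differently but give the same total.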

When you receive an MFFrame object in your own code, you can modify the video frame in all kinds of ways. These are the things you can do with each frame using the built-in features:

- scaling, resizing, cropping and cutting;

- frame rate conversion;

- audio level adjustment;

- audio and video mixing (e.g. overlaying one video frame on top of another);

- video transitions;

- image overlay (with alpha channel support).
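Image overlay with alpha channel support boils down to a per-pixel blend. The C++17 sketch below shows standard straight-alpha “over” compositing on 8-bit RGBA buffers of equal size. The `Rgba` layout and the `blend_channel` and `overlay` names are assumptions for illustration, not MFFrame’s pixel format or API.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <vector>

// One 8-bit pixel with straight (non-premultiplied) alpha. Hypothetical layout.
struct Rgba { std::uint8_t r, g, b, a; };

// Blend one channel: (fg * a + bg * (255 - a)) / 255, rounded to nearest.
inline std::uint8_t blend_channel(std::uint8_t fg, std::uint8_t bg, std::uint8_t a) {
    unsigned v = fg * a + bg * (255u - a);  // at most 255 * 255
    return static_cast<std::uint8_t>((v + 127) / 255);
}

// Overlay `image` onto `frame` in place; both hold width * height pixels.
// The frame's own alpha channel is left untouched.
void overlay(std::vector<Rgba>& frame, const std::vector<Rgba>& image) {
    assert(frame.size() == image.size());
    for (std::size_t i = 0; i < frame.size(); ++i) {
        const Rgba& fg = image[i];
        Rgba& bg = frame[i];
        bg.r = blend_channel(fg.r, bg.r, fg.a);
        bg.g = blend_channel(fg.g, bg.g, fg.a);
        bg.b = blend_channel(fg.b, bg.b, fg.a);
    }
}
```

A simple crossfade transition uses the same formula with a single alpha value for the whole frame, stepped from 0 to 255 over the duration of the transition.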

So virtually everything you need can be done with a single object, the same one that stores the video frames. No external objects or filters required!

Any questions? Feel free to reach out!